In the digital age, data has become a form of currency—collected, stored, traded, and analyzed for countless purposes. Facebook (now part of Meta Platforms, Inc.), as one of the world’s largest and most influential social media companies, holds a vast amount of data about its users: their names, locations, behaviors, preferences, social networks, and even thoughts expressed in posts and messages. While this data enables personalization and innovation, it also raises serious ethical concerns.

This essay explores the key ethical issues related to Facebook’s data practices, including privacy violations, lack of transparency, consent issues, algorithmic bias, manipulation, and data monetization, followed by a real-world example to illustrate the consequences of unethical data handling.

1. The Scope of Facebook’s Data Collection

To understand the ethical concerns, it’s important to first understand what Facebook collects. Data gathered includes:

- Personal information: name, age, gender, location

- Social relationships: friends, groups, followers

- Behavioral data: likes, shares, clicks, browsing activity

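- Biometric data: face-recognition templates derived from photos (Meta announced in November 2021 that it would shut down this system and delete the stored templates)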

- Location data: via IP address, check-ins, and mobile GPS

- Communication: messages, comments, and reactions

- Off-platform activity: through Meta Pixel, plugins, and third-party integrations

Facebook’s ecosystem—spanning Messenger, Instagram, WhatsApp, and external partnerships—provides the company with unmatched access to detailed user behavior.

2. Ethical Concerns Surrounding Facebook’s Data Practices

A. Lack of Informed Consent

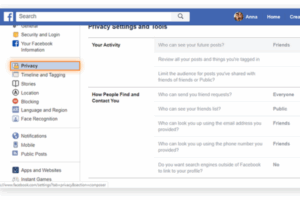

A foundational ethical concern is that many users do not fully understand what data Facebook collects or how it is used. The company’s privacy policies are often long, complex, and written in legal language.

- Users may “agree” to data sharing without understanding the implications.

- Settings are often buried in menus, discouraging people from customizing their privacy options.

- Consent is often bundled—meaning you can’t use the service unless you agree to all terms, creating an illusion of choice.

Ethical Issue: This violates the principle of informed consent, a basic standard in both medical ethics and digital data collection.

B. Privacy Violations

Privacy is a fundamental human right. Facebook has faced criticism for:

- Tracking users even when logged out or after they disable location sharing.

- Collecting data on non-users via shadow profiles.

- Allowing apps to access data about friends of users, without those friends’ knowledge or consent (a key issue in the Cambridge Analytica case).

Ethical Issue: Gathering or exposing personal data without permission violates individual autonomy and dignity.

C. Data Monetization and Exploitation

Facebook’s business model is largely based on advertising revenue, which is driven by detailed user profiling. The company sells access to users’ attention by letting advertisers target audiences on deeply personal attributes, many of them inferred from behavior rather than stated by users. Over the years these have included:

- Race, religion, and political views

- Medical interests or concerns

- Emotional vulnerabilities

While Facebook claims it doesn’t “sell” data directly, it profits by offering paid access to targeted advertising tools.

Ethical Issue: Users are the product, not the customer. Many are unaware that their data is fueling a massive advertising ecosystem.

D. Data Breaches and Security Risks

Even if data is collected legally, it must be stored and protected securely. Facebook has suffered several high-profile data breaches:

- In April 2021, data on roughly 533 million users, scraped from the platform before September 2019, was posted online, including phone numbers and some email addresses.

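- In earlier incidents, attackers stole access tokens for about 30 million accounts (2018), and the company acknowledged in 2019 that it had stored hundreds of millions of user passwords in plain text on internal systems.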

Ethical Issue: Companies that collect massive amounts of personal data have a duty to protect it. Failing to do so puts users at risk of identity theft, harassment, and other harms.

E. Algorithmic Manipulation and Bias

Facebook’s algorithms are designed to maximize engagement, often by showing users content that aligns with their views or triggers strong emotions. This has led to:

- Echo chambers that reinforce misinformation or extremist views

- Bias in content visibility, where certain groups may be disadvantaged

- Emotional manipulation, as demonstrated by a controversial experiment, run in 2012 and published in 2014, in which Facebook altered the news feeds of roughly 689,000 users to study mood effects without their informed consent

Ethical Issue: Algorithms can unintentionally promote harm, and users are often unaware of how these systems influence their behavior or worldview.

F. Influence on Elections and Democracy

Perhaps the most controversial ethical issue has been Facebook’s role in spreading misinformation and influencing political outcomes.

- The 2016 U.S. election saw Facebook used to distribute fake news and propaganda, much of it targeted with the help of user data.

- Cambridge Analytica, a data analytics firm, used improperly obtained Facebook data in an attempt to influence voter behavior through microtargeted ads.

Ethical Issue: Facebook’s data tools can be weaponized to manipulate voters, polarize public opinion, and undermine democratic processes.

G. Impact on Mental Health and Well-being

Studies have linked heavy social media use, especially when driven by algorithmic feedback loops, to the following problems, although the direction and strength of the causal relationship are still debated:

- Depression, anxiety, and low self-esteem

- Social comparison and body image issues (especially among teens)

- Addiction-like behavior encouraged by notifications and “likes”

Ethical Issue: Platforms that collect and analyze behavioral data should not use that data in ways that negatively impact mental health, particularly when the goal is to keep users engaged for profit.

3. Case Study: The Cambridge Analytica Scandal

One of the most significant examples of ethical failure in Facebook’s data practices is the Cambridge Analytica scandal.

What happened?

- A researcher, Aleksandr Kogan, created a personality quiz app called “This Is Your Digital Life.”

- Around 270,000 users installed it, granting access to their personal data—and, critically, to the data of their Facebook friends.

- Data from up to 87 million users was harvested without their explicit consent.

- The data was passed to Cambridge Analytica, which used it to build psychological profiles for targeted political advertising, most notably in the 2016 U.S. election. The firm’s alleged involvement in the Brexit referendum remains disputed.
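The scale of the friend-permission problem is easier to see as simple arithmetic. The short Python sketch below uses only the two estimates quoted above (about 270,000 installers and up to 87 million affected profiles). The variable names are illustrative, and the result is a rough ratio rather than a measured friend count, since friend lists overlap.

```python
# Illustrative arithmetic only; both figures are the estimates quoted above.
installers = 270_000             # users who installed the quiz app
affected_profiles = 87_000_000   # upper estimate of profiles harvested

# Average number of harvested profiles per installer, including the
# installer's own profile. Overlapping friend lists are ignored.
profiles_per_installer = affected_profiles / installers

# Share of affected people who never installed the app themselves.
non_installer_share = (affected_profiles - installers) / affected_profiles

print(f"{profiles_per_installer:.0f} profiles harvested per installer")
print(f"{non_installer_share:.1%} of affected users never installed the app")
```

On these figures, each installation exposed several hundred profiles on average, and nearly everyone affected had no opportunity to agree or refuse.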

Why it’s ethically problematic:

- Users were unaware that their data was being used for political manipulation.

- Facebook failed to prevent or properly disclose the misuse.

- The event revealed systemic weaknesses in data oversight and enforcement.

Outcome:

- Facebook’s CEO, Mark Zuckerberg, testified before U.S. Congress and the European Parliament.

- The company was fined $5 billion by the Federal Trade Commission in 2019.

- Public trust in Facebook plummeted.

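- The scandal intensified calls for stronger privacy laws modeled on the EU’s General Data Protection Regulation (GDPR), which had been adopted in 2016 and took effect in May 2018, shortly after the story broke.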

4. Facebook’s Response and Ethical Improvements

To its credit, Facebook has taken steps to address some of these concerns, including:

- Redesigning privacy settings to be more accessible

- Launching the “Off-Facebook Activity” tool, which shows users the data that other apps and websites share with Facebook and lets them disconnect it from their accounts

- Implementing stricter third-party data access policies

- Expanding fact-checking programs to combat misinformation

- Increasing transparency in political ads

However, critics argue that these changes are often reactive, not proactive—and sometimes more about public relations than genuine ethical reform.

5. Broader Ethical Reflections

At the heart of these concerns is the power imbalance between Facebook and its users. Most people do not have the knowledge or tools to understand how their data is used, and even fewer have alternatives if they object.

Ethically, companies that collect and benefit from user data should adhere to the following principles:

- Transparency: Clearly explain what data is collected and how it’s used.

- Consent: Obtain meaningful, informed consent—not just blanket acceptance.

- Minimization: Collect only what is necessary and avoid overreach.

- Security: Safeguard data from unauthorized access or breaches.

- Accountability: Accept responsibility for misuse and commit to redress.
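To make the consent and minimization principles concrete, here is a minimal Python sketch of purpose-based access to profile data. It is an illustration under stated assumptions, not a description of how Facebook or any real platform works: `ConsentRecord`, `FIELDS_BY_PURPOSE`, `fields_for`, and the purpose names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Purposes a user has explicitly opted into (hypothetical model)."""
    user_id: str
    granted_purposes: set[str] = field(default_factory=set)


# Hypothetical mapping: the only fields each purpose is allowed to read.
FIELDS_BY_PURPOSE = {
    "account_security": {"email"},
    "ad_personalization": {"age_range", "page_likes"},
}


def fields_for(purpose: str, profile: dict, consent: ConsentRecord) -> dict:
    """Return only the fields needed for `purpose`, and only with consent."""
    if purpose not in consent.granted_purposes:
        return {}  # no consent for this purpose: release nothing
    allowed = FIELDS_BY_PURPOSE.get(purpose, set())
    return {key: value for key, value in profile.items() if key in allowed}


# Example data, invented for illustration.
profile = {
    "email": "a@example.com",
    "age_range": "25-34",
    "page_likes": ["hiking"],
    "location": "Lisbon",
}
consent = ConsentRecord("u1", {"account_security"})

security_view = fields_for("account_security", profile, consent)
ads_view = fields_for("ad_personalization", profile, consent)
print(security_view)  # only the email field
print(ads_view)       # empty: the user never opted into ad personalization
```

The design choice worth noting is that consent is recorded per purpose rather than as one blanket acceptance, and that each purpose can read only the fields it needs. This is the opposite of the bundled consent described earlier.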

Conclusion

Facebook’s data practices raise profound ethical questions about privacy, manipulation, and the role of technology in society. While data collection enables personalized services and targeted advertising, it also opens the door to misuse—especially when users are unaware of how their information is being used or manipulated.

The Cambridge Analytica scandal, along with numerous privacy lapses and security breaches, shows how the misuse of data can have global consequences. In an era where data is as valuable as oil, ethical data stewardship is not just a moral obligation—it is essential to preserving trust, democracy, and individual autonomy.

Ultimately, users must demand greater transparency and control, and regulators must enforce stricter rules. But most importantly, companies like Facebook must recognize that ethical responsibility is not optional—it is fundamental to their role in shaping our digital future.